Three months. That’s all the time it took for two of us to build a production-grade, multi-agent AI chat app from the first line of code to a live launch.

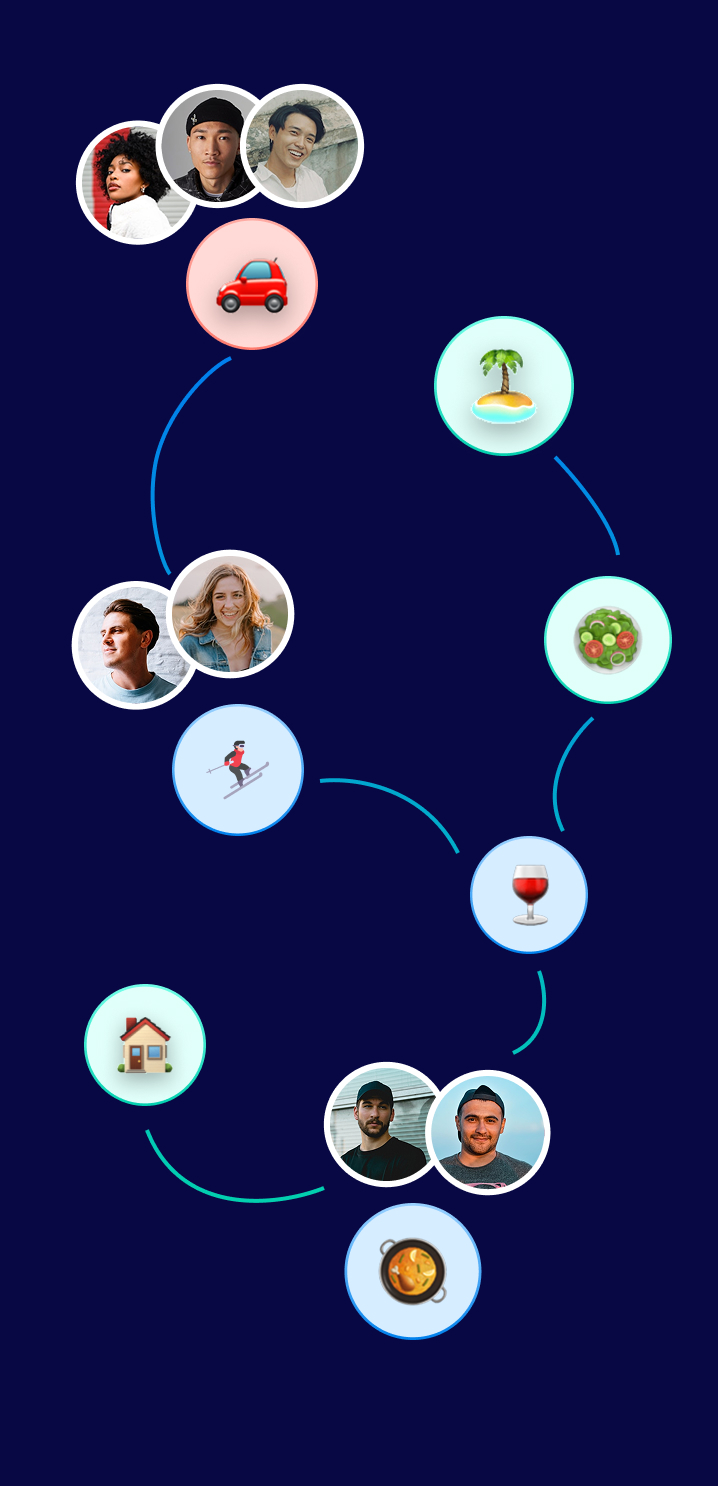

To quickly set the stage, we built "Conveen": a chat-based AI concierge that understands individual and group preferences, then turns intent into decisions. No searching, comparing, or coordinating across other apps. Our agents capture what people like (and don’t), then reason across everyone in real time to deliver the best option for the group.

As "techies," our first instinct was to play with the shiny toys. We explored vibe coding tools like Lovable and Emergent—great for a weekend demo, but they don't hold up when real customers start knocking. We rigged up n8n automations inside a Telegram bot—fine for a prototype, but not for a business. We even tinkered with Supabase and OpenAI’s Agent canvas. While those are solid for small-scale apps, they started to feel like "daisy-chaining" once we looked at the complexity we were actually trying to solve.

Ultimately, we stopped trying to stitch together a Frankenstein’s monster of tools and settled on a unified stack: Google.

The Database Dilemma: Why Firebase Won

The clock started on January 1, 2026. By March 31, Conveen Group Chat was born.

Our first big hurdle was the data layer. Supabase has a sleek UI and great integrations, but for a chat app, the friction was real. Basic requirements like SMS verification were a manual chore to link up with providers. More importantly, building a chat app means managing "conversations," "participants," and "messages." In Supabase, these required explicit, rigid definitions.
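
To make the three entities concrete, here is a minimal sketch of how they might look as nested documents. Every field name and the `appendMessage` helper are hypothetical, chosen for illustration; this is not Conveen's actual schema.

```typescript
// Hypothetical document shapes; field names are assumptions for illustration.
interface Participant {
  userId: string;
  displayName: string;
  joinedAt: number; // epoch milliseconds
}

interface Message {
  id: string;
  senderId: string; // must match a participant's userId
  text: string;
  sentAt: number; // epoch milliseconds
}

interface Conversation {
  id: string;
  title: string;
  participants: Record<string, Participant>; // keyed by userId
  messages: Message[]; // in a document store this would likely be a subcollection
}

// Returns a new conversation with the message appended; rejects unknown senders.
function appendMessage(conversation: Conversation, message: Message): Conversation {
  const known = Object.prototype.hasOwnProperty.call(
    conversation.participants,
    message.senderId
  );
  if (!known) {
    throw new Error(`Unknown sender: ${message.senderId}`);
  }
  return { ...conversation, messages: [...conversation.messages, message] };
}

const dinner: Conversation = {
  id: "c1",
  title: "Family dinner",
  participants: {
    ana: { userId: "ana", displayName: "Ana", joinedAt: 1000 },
  },
  messages: [],
};

const updated = appendMessage(dinner, {
  id: "m1",
  senderId: "ana",
  text: "Where should we eat?",
  sentAt: 2000,
});
```

Because the shapes are plain nested objects, adding a field later (say, a per-participant preference list) does not require a migration in a schemaless store, which is the flexibility described above.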

We needed non-negotiables: offline resilience, push notifications, and real-time presence. Firebase handled Auth (SMS + 2FA), flexible schemas, and offline sync out of the box. Even something as small as a heartbeat typing indicator, essential for that "live" feel, was a breeze in Firebase’s Realtime Database. In contrast, in a relational setup like Supabase, "typing..." updates often end up as database writes that then have to be broadcast to every participant.
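
The heartbeat idea itself is simple enough to sketch without any SDK. The snippet below is a dependency-free illustration, not Firebase code: each client periodically writes a timestamp while the user types, and readers treat anyone whose last heartbeat is older than a time-to-live as no longer typing. The names `recordHeartbeat`, `whoIsTyping`, and the 5-second TTL are assumptions.

```typescript
// userId -> timestamp (epoch ms) of that user's most recent typing heartbeat.
type TypingHeartbeats = Record<string, number>;

// Assumed TTL: a user counts as "typing" for 5 seconds after their last heartbeat.
const TYPING_TTL_MS = 5000;

// Returns a new map with the user's heartbeat set to `now`.
function recordHeartbeat(
  heartbeats: TypingHeartbeats,
  userId: string,
  now: number
): TypingHeartbeats {
  return { ...heartbeats, [userId]: now };
}

// Returns the sorted ids of users whose last heartbeat is within the TTL.
function whoIsTyping(
  heartbeats: TypingHeartbeats,
  now: number,
  ttlMs: number = TYPING_TTL_MS
): string[] {
  return Object.keys(heartbeats)
    .filter((userId) => now - heartbeats[userId] <= ttlMs)
    .sort();
}

let heartbeats: TypingHeartbeats = {};
heartbeats = recordHeartbeat(heartbeats, "ana", 1000);
heartbeats = recordHeartbeat(heartbeats, "ben", 4000);

const typingAt5s = whoIsTyping(heartbeats, 5000); // both heartbeats are fresh
const typingAt6_5s = whoIsTyping(heartbeats, 6500); // ana's heartbeat has expired
```

In a real-time store, the heartbeat map would live at a per-conversation path that clients subscribe to, so stale entries simply age out on the reader side without a cleanup job.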

By choosing Firebase, we stopped worrying about the plumbing and started focusing on what actually makes Conveen special: multi-agent, multi-variate reasoning.

The Data Moat: Choosing Gemini

Our goal was ambitious:

(1) Kill the "app-switching fatigue" of jumping between Yelp, OpenTable, and Resy just to answer a simple "where should we eat?" or "what should we do?" in the family group chat.

(2) Bridge the gap between a standard LLM and real-world execution, so that for any general ask our AI responds like a friend in a text thread (concise and casual) rather than in the "encyclopedia" style of apps like ChatGPT, which hit you with a wall of text.

When you’re building a recommendation engine for literally anything, you have to look at the data behind the model. Most models train on web crawls such as Common Crawl, but Google has a "data moat" that is hard to ignore. Google Places alone tracks over 250 million locations with deep metadata (sorry, Yelp, you don’t compare). Some other models rely on Bing for live web grounding, and let’s be real: when was the last time you used Bing to find a great local dinner spot?

Gemini was the clear choice for grounding our agents in the physical world.

The Orchestrator: Genkit vs. LangChain

We weren't building a simple wrapper; we were building a multi-agent harness that conducts complex reasoning between multiple agents and users to find the perfect group recommendation.

We chose Genkit, Google’s native AI framework, over LangChain. Yes, it’s the "new kid on the block," but the developer experience is miles ahead.

> The UI is stunning: You can inspect traces, test prompts with live inputs, and visualize execution flows without digging through endless log lines.

> Strongly Typed AI: It’s built on Zod and TypeScript. If the AI tries to hallucinate a response that doesn't fit your schema, the flow fails immediately.

> Production-Ready: Since we were already in the Firebase ecosystem, telemetry (tracking latency and token usage) was practically "turnkey."
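
To illustrate the fail-fast behavior behind the "Strongly Typed AI" point, here is a hand-rolled, dependency-free stand-in for what a schema check enforces. It does not use Zod or Genkit; the `Recommendation` shape and `parseRecommendation` function are hypothetical, and in a real Genkit flow the schema would be declared with Zod instead.

```typescript
// Hypothetical output shape for a recommendation agent.
interface Recommendation {
  name: string;
  reason: string;
  confidence: number; // expected to be between 0 and 1
}

// Parses raw model output and throws on the first field that violates the shape.
function parseRecommendation(raw: string): Recommendation {
  const data: unknown = JSON.parse(raw); // throws on malformed JSON
  if (typeof data !== "object" || data === null) {
    throw new Error("Model output is not a JSON object");
  }
  const { name, reason, confidence } = data as Record<string, unknown>;
  if (typeof name !== "string" || name.length === 0) {
    throw new Error("Invalid 'name': expected a non-empty string");
  }
  if (typeof reason !== "string") {
    throw new Error("Invalid 'reason': expected a string");
  }
  if (typeof confidence !== "number" || confidence < 0 || confidence > 1) {
    throw new Error("Invalid 'confidence': expected a number between 0 and 1");
  }
  return { name, reason, confidence };
}

const valid = parseRecommendation(
  '{"name": "Taco Stand", "reason": "Everyone likes tacos", "confidence": 0.9}'
);
```

The design point is that a malformed response surfaces as an error at the boundary, where it can be retried or logged, instead of flowing into the chat as a half-formed recommendation.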

Is it perfect? No. The documentation can lag, and we definitely spent some late nights combing through GitHub source code or Reddit to figure out specific provider implementations. It’s also a TypeScript-heavy world; if you’re a Python purist, moving to Genkit feels like moving to a different planet. But overall, Genkit feels like the MacBook of AI frameworks: it’s polished, stays in the ecosystem, and works beautifully if you use it the way it was intended.

The Finish Line

By combining Firebase, Gemini, and Genkit, we created a space where you can drop a request into a group chat ("find us a place for dinner") and our AI agents do the heavy lifting. They analyze the unique preferences of every participant (and the potential combinations of those preferences) to serve the one result that actually makes everyone happy.
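
As a toy illustration of combining preferences across a group, the sketch below drops any option that hits someone's dislike and ranks the rest by how many likes they match. This is a deliberately simple heuristic under assumed data shapes (`Preferences`, `Option`, `pickForGroup` are all hypothetical), not the multi-agent reasoning Conveen actually runs.

```typescript
// Hypothetical shapes: tags stand in for cuisines, vibes, dietary needs, etc.
interface Preferences {
  likes: string[];
  dislikes: string[];
}

interface Option {
  name: string;
  tags: string[];
}

// Returns the non-vetoed option matching the most likes across the group,
// or null if every option is vetoed. Ties go to the earlier option.
function pickForGroup(options: Option[], group: Preferences[]): Option | null {
  let best: Option | null = null;
  let bestScore = -1;
  for (const option of options) {
    // An option is vetoed if it carries any tag that any participant dislikes.
    const vetoed = group.some((person) =>
      person.dislikes.some((tag) => option.tags.indexOf(tag) !== -1)
    );
    if (vetoed) continue;
    // Score = total number of liked tags the option matches, summed over everyone.
    const score = group.reduce(
      (sum, person) =>
        sum + person.likes.filter((tag) => option.tags.indexOf(tag) !== -1).length,
      0
    );
    if (score > bestScore) {
      best = option;
      bestScore = score;
    }
  }
  return best;
}

const options: Option[] = [
  { name: "Sushi Bar", tags: ["sushi", "seafood"] },
  { name: "Taco Stand", tags: ["mexican", "vegetarian", "casual"] },
  { name: "Pizza Place", tags: ["italian", "vegetarian"] },
];

const group: Preferences[] = [
  { likes: ["sushi", "mexican"], dislikes: [] },
  { likes: ["vegetarian", "casual"], dislikes: ["seafood"] },
  { likes: ["mexican"], dislikes: [] },
];

const choice = pickForGroup(options, group);
```

Here the sushi option is ruled out by one person's seafood dislike, and the taco option wins because it matches the most likes across all three people.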

Our beta users are already applauding the clean experience and the accuracy of the recommendations. It’s been a wild 90 days, but seeing these three technologies work together to solve the "where should we go?" argument once and for all? That’s the real win.

Curious to see what a "Google-native" AI stack looks like in the wild? Give Conveen a spin and see the reasoning in action.

Written by ConveenAI Founders

April 2026